Connect 4 RL Agents

The Problem

Traditional game-playing bots rely on hard-coded heuristics that are brittle and difficult to generalize across board states.

The Solution

Built two AI agents that play Connect 4: one using Monte Carlo Tree Search (MCTS), which learns move values from simulated games in the spirit of reinforcement learning, and one using Minimax with alpha-beta pruning, a classical search algorithm. MCTS is a natural fit because Connect 4 has a manageable branching factor (at most 7 legal columns per move), so the tree search can run many simulated playouts per move. Minimax with alpha-beta pruning works well because the game is finite and bounded (a 6x7 board allows at most 42 moves), and pruning makes it feasible to search deep enough for near-optimal play. Together they provide strong, adaptive agents without hand-crafted evaluation rules.
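To make the Minimax side concrete, here is a minimal sketch of depth-limited alpha-beta search, written in negamax form. It is not the project's actual code: the board representation, the function names (`new_board`, `legal_moves`, `drop`, `wins`, `negamax`), the centre-first move ordering, and the neutral score of 0 at the depth cutoff are all assumptions made for illustration.

```python
from typing import List, Optional, Tuple

ROWS, COLS = 6, 7
Board = List[List[int]]  # board[row][col]; row 0 is the bottom; 0 = empty, 1 / -1 = players


def new_board() -> Board:
    return [[0] * COLS for _ in range(ROWS)]


def legal_moves(board: Board) -> List[int]:
    # Assumed heuristic ordering: centre columns first, which tends to help pruning.
    return [c for c in (3, 2, 4, 1, 5, 0, 6) if board[ROWS - 1][c] == 0]


def drop(board: Board, col: int, player: int) -> int:
    """Place a piece in the lowest empty cell of `col` and return its row."""
    for row in range(ROWS):
        if board[row][col] == 0:
            board[row][col] = player
            return row
    raise ValueError("column is full")


def wins(board: Board, row: int, col: int) -> bool:
    """Check whether the piece at (row, col) completes a line of four."""
    player = board[row][col]
    for dr, dc in ((0, 1), (1, 0), (1, 1), (1, -1)):
        count = 1
        for sign in (1, -1):  # walk both ways along the direction
            r, c = row + sign * dr, col + sign * dc
            while 0 <= r < ROWS and 0 <= c < COLS and board[r][c] == player:
                count += 1
                r, c = r + sign * dr, c + sign * dc
        if count >= 4:
            return True
    return False


def negamax(board: Board, player: int, depth: int,
            alpha: float = float("-inf"),
            beta: float = float("inf")) -> Tuple[float, Optional[int]]:
    """Return (score, best column) from the point of view of `player`."""
    moves = legal_moves(board)
    if depth == 0 or not moves:
        return 0.0, None  # draw or depth cutoff: neutral score (assumption, no heuristic)
    best_score, best_move = float("-inf"), None
    for col in moves:
        row = drop(board, col, player)
        if wins(board, row, col):
            score = 1.0 + depth  # larger remaining depth means a faster win
        else:
            # The opponent's best score, negated, is our score for this move.
            score = -negamax(board, -player, depth - 1, -beta, -alpha)[0]
        board[row][col] = 0  # undo the move
        if score > best_score:
            best_score, best_move = score, col
        alpha = max(alpha, score)
        if alpha >= beta:
            break  # the opponent would never allow this line: prune
    return best_score, best_move
```

Calling `negamax(board, 1, depth=6)` on a fresh board returns a score and a column for player 1. The depth of 6 is an arbitrary example; a deeper search plays better at a higher cost per move.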

Results

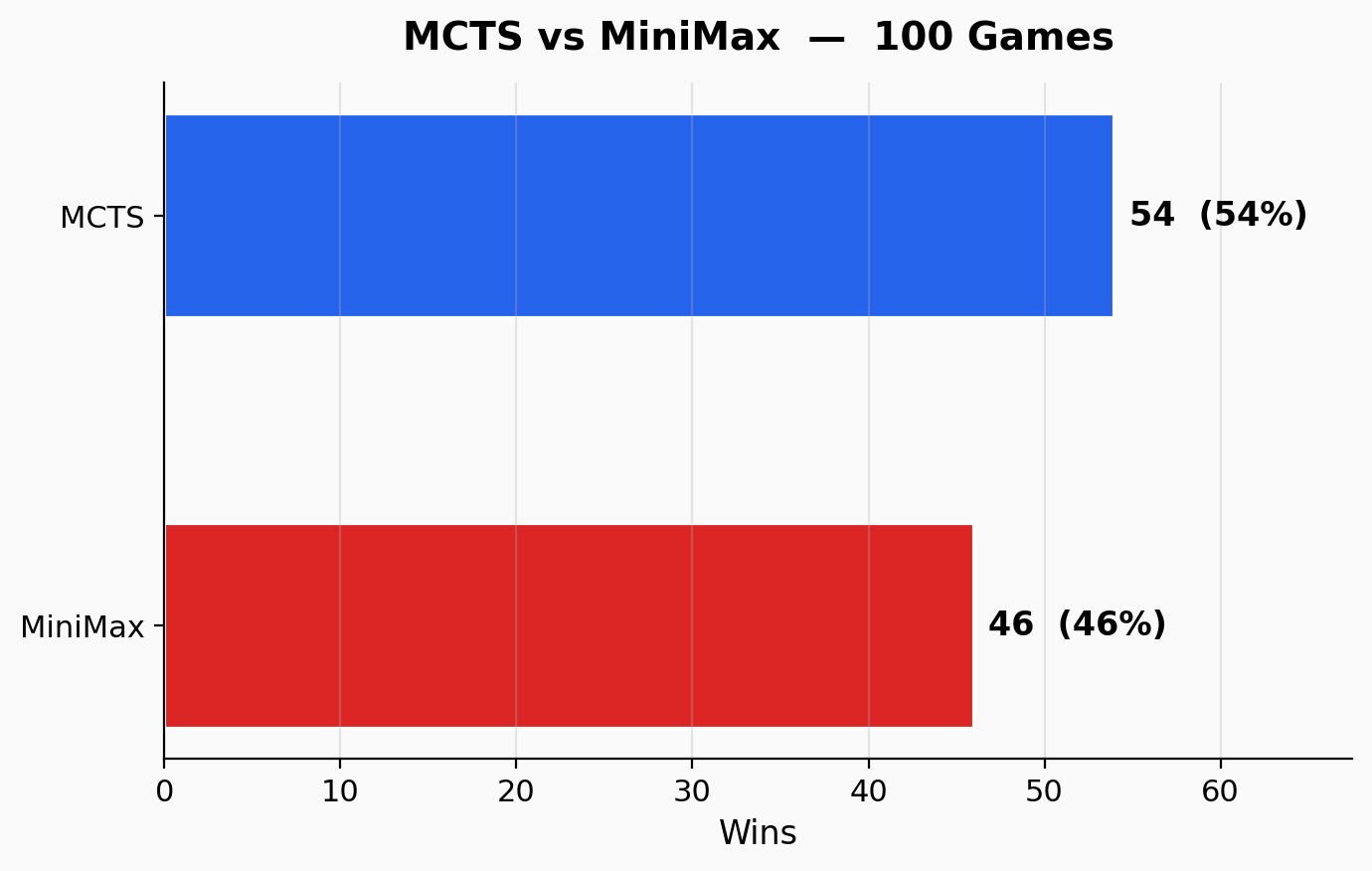

In a 100-game match between the two agents, MCTS edged out Minimax 54 wins to 46. The margin is narrow, so the result shows two closely matched approaches rather than one clearly dominating the other.
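One way to gauge how much a 54-46 split says about relative strength is an exact binomial test against a 50/50 null. The sketch below assumes the 100 games are independent and that none were drawn (the two win counts sum to 100). The function name `binomial_two_sided_p` is hypothetical.

```python
from math import comb


def binomial_two_sided_p(wins: int, games: int, p_null: float = 0.5) -> float:
    """Exact two-sided p-value: total probability of all outcomes
    that are no more likely than the observed win count under the null."""
    pmf = [comb(games, k) * p_null ** k * (1 - p_null) ** (games - k)
           for k in range(games + 1)]
    observed = pmf[wins]
    # A small relative tolerance guards against floating-point ties.
    return min(1.0, sum(p for p in pmf if p <= observed * (1 + 1e-9)))


p_value = binomial_two_sided_p(54, 100)
print(f"two-sided p-value for 54 wins in 100 games: {p_value:.3f}")
```

Under these assumptions the p-value comes out well above the conventional 0.05 threshold, so this match alone does not distinguish the two agents' strength.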

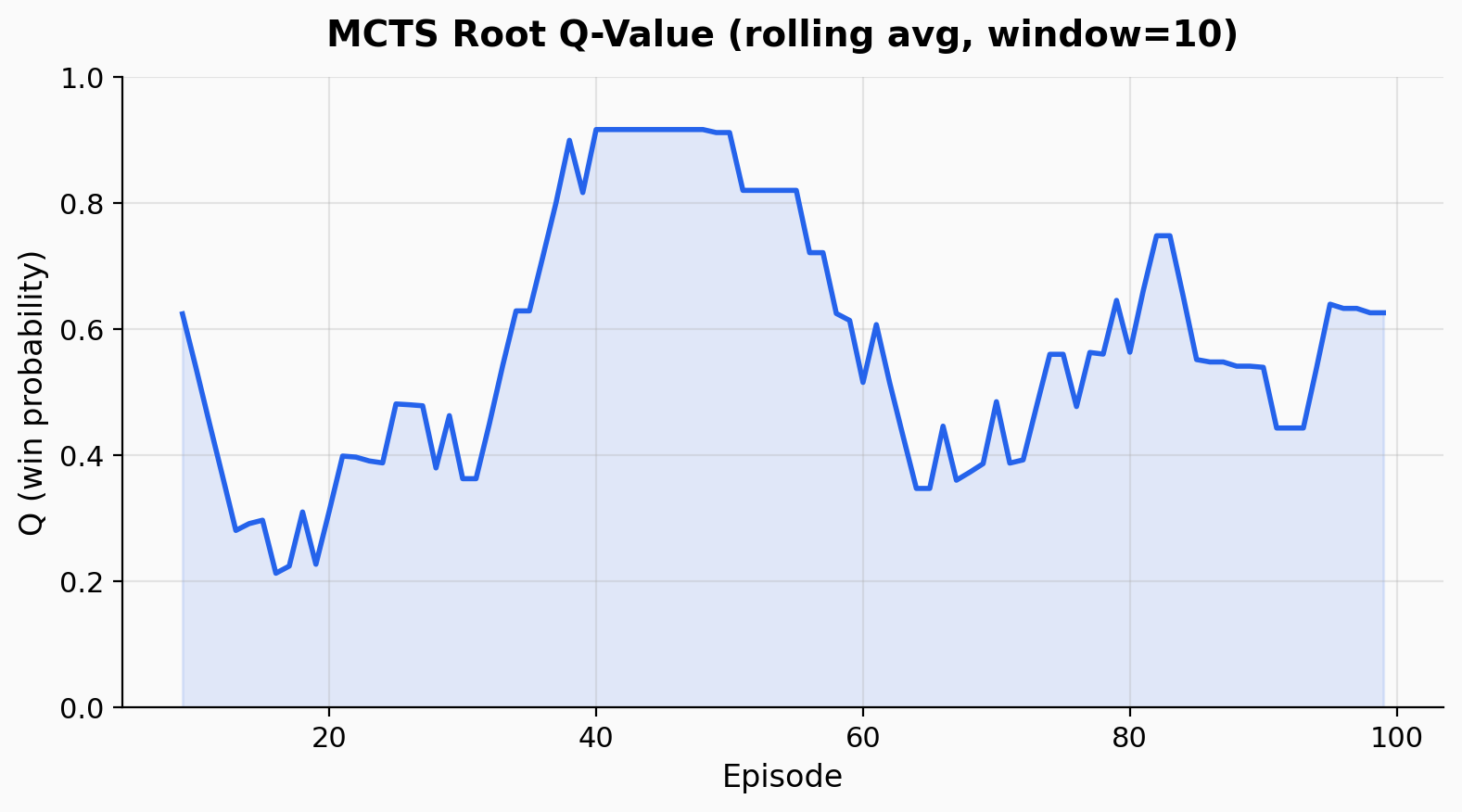

The rolling average of the MCTS root Q-value across episodes shows the agent’s confidence in its position over time. Early episodes are noisy as the tree builds up, but confidence stabilizes in the 0.4-0.6 range.
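A rolling average like the one plotted can be computed with a trailing window. This is a minimal sketch: the function name `rolling_mean` and the window size are assumptions, and it assumes one root Q-value is logged per episode. The first few points average over fewer values until the window fills.

```python
from collections import deque
from typing import Iterable, List


def rolling_mean(values: Iterable[float], window: int) -> List[float]:
    """Trailing moving average; early entries use however many values exist so far."""
    if window < 1:
        raise ValueError("window must be at least 1")
    buf: deque = deque(maxlen=window)
    total = 0.0
    out: List[float] = []
    for v in values:
        if len(buf) == window:
            total -= buf[0]  # the oldest value is about to be evicted
        buf.append(v)
        total += v
        out.append(total / len(buf))
    return out


# Illustrative values only, not data from the experiment.
print(rolling_mean([1.0, 2.0, 3.0, 4.0], window=2))
```

A larger window smooths more of the early noise, at the cost of reacting more slowly to real shifts in the Q-value.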

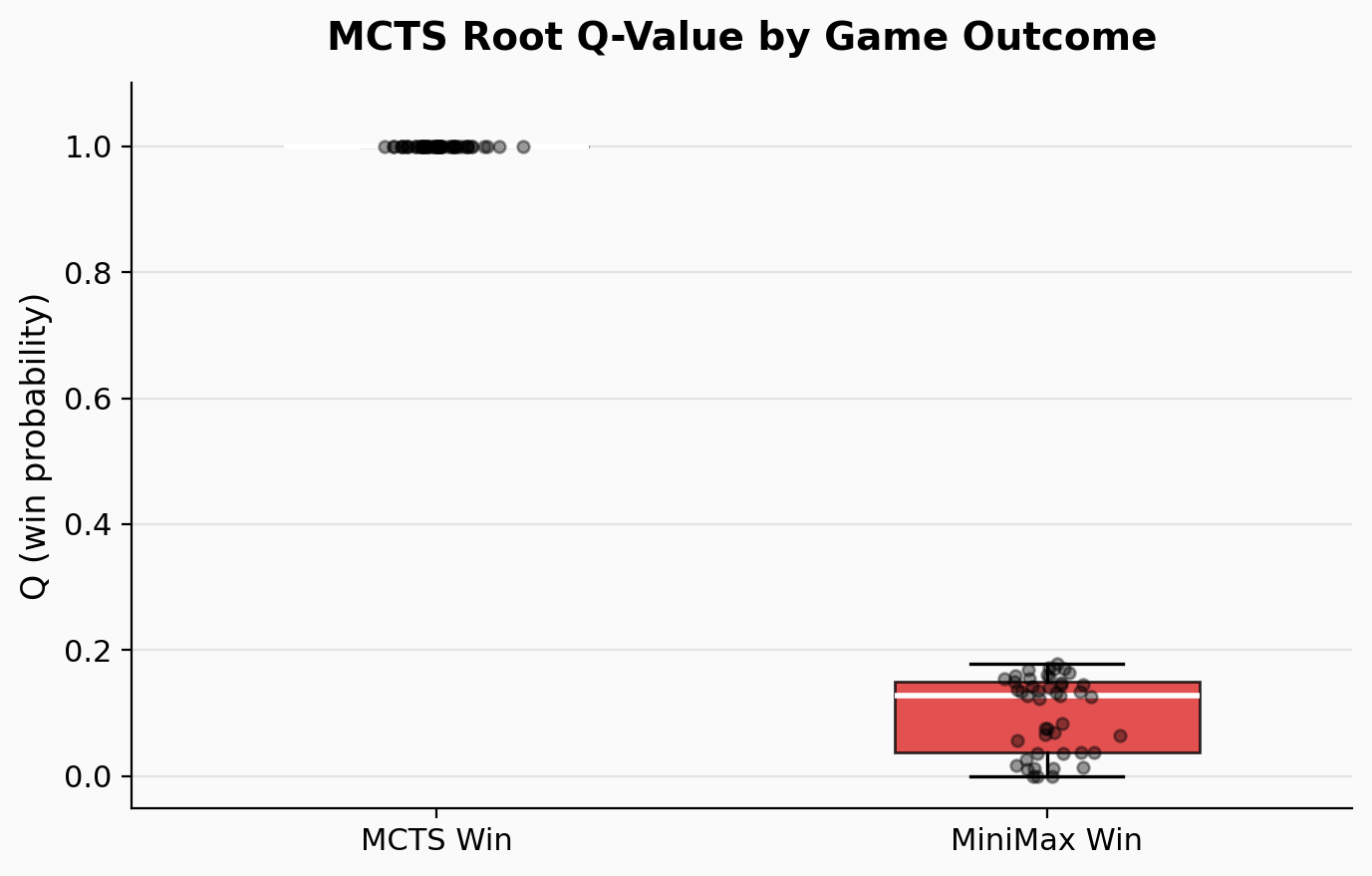

When grouped by game outcome, the MCTS Q-values tell a clear story: games MCTS won have root Q-values clustered near 1.0, while games Minimax won have low root Q-values, roughly 0.0-0.2. This separation suggests the agent’s self-evaluation tracks actual outcomes and is reasonably well-calibrated.
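A calibration claim can be checked more directly by binning games by root Q-value and comparing each bin's mean Q with the fraction of games MCTS actually won. The sketch below assumes one root Q-value in [0, 1] is recorded per game. The function name `calibration_table` and the bin count are hypothetical, and the sample inputs are made up for illustration.

```python
from typing import List, Sequence, Tuple

Row = Tuple[float, float, float, float, int]  # (bin_low, bin_high, mean_q, win_rate, count)


def calibration_table(q_values: Sequence[float],
                      mcts_won: Sequence[bool],
                      n_bins: int = 5) -> List[Row]:
    """Group games into equal-width Q bins and report mean Q vs. empirical win rate."""
    bins: List[List[Tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for q, won in zip(q_values, mcts_won):
        # Clamp so that q == 1.0 lands in the last bin and stray negatives in the first.
        idx = max(0, min(int(q * n_bins), n_bins - 1))
        bins[idx].append((q, won))
    rows: List[Row] = []
    for i, items in enumerate(bins):
        if not items:
            continue  # skip empty bins
        mean_q = sum(q for q, _ in items) / len(items)
        win_rate = sum(1 for _, won in items if won) / len(items)
        rows.append((i / n_bins, (i + 1) / n_bins, mean_q, win_rate, len(items)))
    return rows


# Made-up example inputs, not results from the experiment.
for row in calibration_table([0.1, 0.15, 0.9, 0.95], [False, False, True, True]):
    print(row)
```

For a well-calibrated agent, the mean Q-value and the win rate in each bin should be close to each other.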